Content automation: Reddit pain points research

A marketing team needed a faster way to turn Reddit conversations into usable research. We built an n8n pipeline that finds high-signal threads, extracts full comment trees via Reddit OAuth, and compiles quotes into a Google Doc. An AI pass then clusters pain points and ranks them by business potential, preserving exact user language for copywriting.

Overview

We worked with a mid-sized content marketing agency serving eCommerce and eLearning clients. Their content team relied heavily on Reddit to surface authentic pain points and conversation snippets for blog posts, ad copy, and high-converting lead magnets. They needed a system that was reliable and auditable and that cut the hours lost to manual browsing. We built a workflow that searches for emotionally rich threads, extracts every comment, and turns the results into a structured brief with categorized pain points and quotes.

The impact: reliable research inputs and clearer content opportunities without the manual slog.

Challenge

Most weeks started with someone skimming subreddits, bookmarking posts, and pasting fragments into a doc. The process was inconsistent: great threads were missed, quotes lost their context, and nobody could tell how representative any insight was. The team wasn’t short on data; they lacked a repeatable way to find, structure, and reuse Reddit insights.

Solution

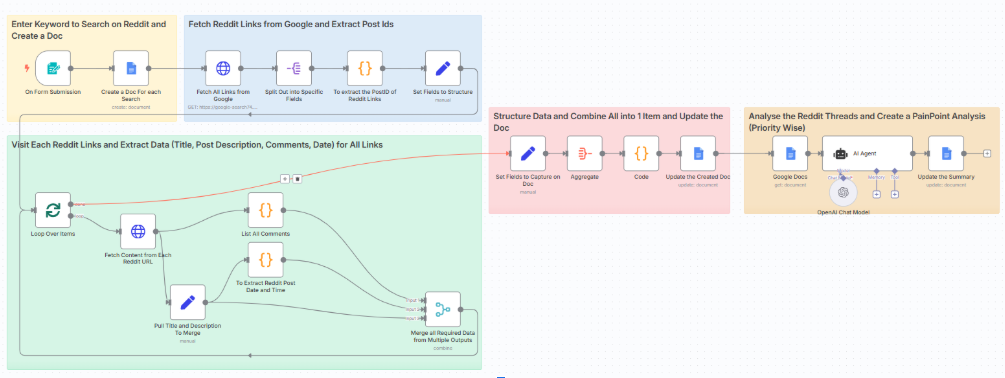

We reframed Reddit from “something to browse” into a corpus to structure. The n8n workflow:

- Collects a query from a simple form,

- Uses an advanced Google search to find comment-rich Reddit threads,

- Extracts full comment trees via the OAuth-authenticated Reddit API,

- Writes a clean evidence section into a Google Doc,

- And runs an AI analysis that categorizes pain points, preserves verbatim quotes, and ranks opportunities by business potential.

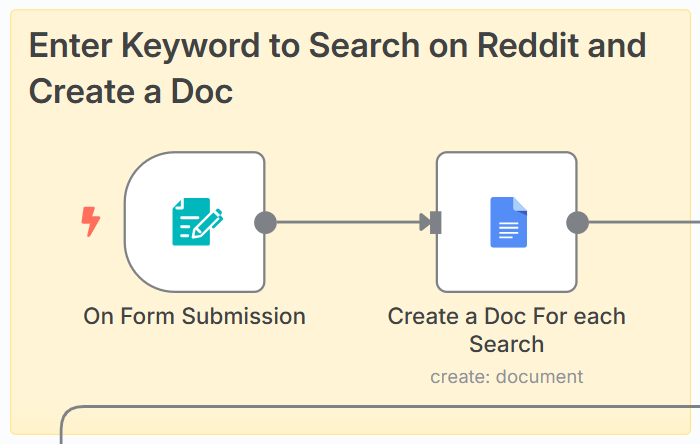

Phase 1 — Input & Document Setup

- What it does: Starts when a team member submits a research query (e.g. “email marketing challenges”). A Google Doc is automatically created and titled with the query.

- Why we built it this way: Each query produces its own workspace, keeping research runs auditable and avoiding the clutter of manual docs.

- Implementation: n8n’s Google Docs node creates the doc in a predefined folder, so permissions and versioning stay consistent across runs.
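A minimal sketch of the provisioning step, for readers reproducing it outside n8n. It assumes the Google Drive API v3 `files.create` endpoint, where creating a file with the Google Docs MIME type and a `parents` entry places a new doc directly in a folder. `build_doc_metadata` and the folder ID are hypothetical, and the function only builds the request body; it does not call the API.

```python
import re

# MIME type the Drive API uses for native Google Docs files.
GOOGLE_DOC_MIME = "application/vnd.google-apps.document"


def build_doc_metadata(query: str, folder_id: str) -> dict:
    """Build Drive `files.create` metadata for one research run (hypothetical helper).

    The doc is titled with the submitted query and placed in a predefined
    folder, so it picks up that folder's sharing settings.
    """
    title = re.sub(r"\s+", " ", query).strip()  # tidy stray whitespace from the form
    if not title:
        raise ValueError("research query must not be empty")
    return {
        "name": title,
        "mimeType": GOOGLE_DOC_MIME,
        "parents": [folder_id],  # hypothetical ID of the research folder
    }


print(build_doc_metadata("  email marketing   challenges ", "FOLDER_ID"))
# {'name': 'email marketing challenges', 'mimeType': 'application/vnd.google-apps.document', 'parents': ['FOLDER_ID']}
```

Keeping one doc per query, named after the query itself, is what makes each run easy to find and audit later.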

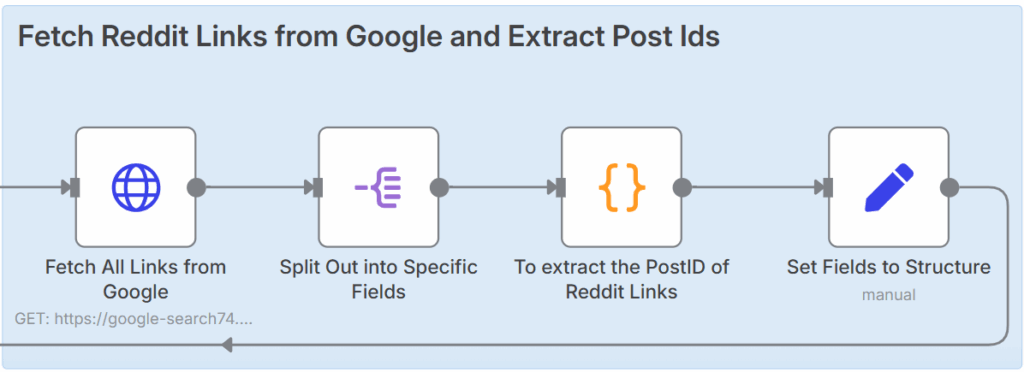

Phase 2 — Discovering Relevant Threads

- What it does: The workflow searches Google (via RapidAPI) using carefully engineered queries that prioritize authentic, emotional phrasing (“I think…”, “my biggest struggle…”) and returns Reddit thread URLs likely to contain rich, first-hand stories.

- Why we built it this way: Skimming subreddits misses gold. By anchoring the search on vulnerable phrasing, we capture conversations where people openly share pain points.

- Implementation: A regex extracts the post ID from each Reddit link and turns it into a Reddit API endpoint. Fields like title, description, and doc ID are normalized for downstream steps.
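The search and ID-extraction steps can be sketched as below. The `site:` operator and quoted phrases joined with `OR` are standard Google search syntax, but the exact query the workflow sends through RapidAPI is not published, so the phrase list and both helper names are assumptions. The endpoint follows Reddit's `GET /comments/{article}` route on the OAuth host `oauth.reddit.com`; obtaining and sending the bearer token is not shown.

```python
import re
from typing import Optional, Sequence

# Example first-person phrases quoted in the article; a real run would likely use more.
EMOTIONAL_PHRASES = ("I think", "my biggest struggle")

# Captures the base36 post ID from URLs such as
# https://www.reddit.com/r/<subreddit>/comments/<post_id>/<slug>/
POST_ID_RE = re.compile(r"reddit\.com/r/[^/]+/comments/([a-z0-9]+)", re.IGNORECASE)


def build_search_query(topic: str, phrases: Sequence[str] = EMOTIONAL_PHRASES) -> str:
    """Restrict results to Reddit and ask for at least one emotional phrase."""
    phrase_clause = " OR ".join(f'"{p}"' for p in phrases)
    return f'site:reddit.com "{topic}" ({phrase_clause})'


def to_api_endpoint(url: str) -> Optional[str]:
    """Map a Reddit thread URL to its OAuth comments endpoint, or None if no post ID."""
    match = POST_ID_RE.search(url)
    if match is None:
        return None
    return f"https://oauth.reddit.com/comments/{match.group(1)}"


print(build_search_query("email marketing challenges"))
# site:reddit.com "email marketing challenges" ("I think" OR "my biggest struggle")
print(to_api_endpoint("https://www.reddit.com/r/Emailmarketing/comments/abc123/my_biggest_struggle/"))
# https://oauth.reddit.com/comments/abc123
```

Returning `None` for non-thread links (subreddit home pages, user profiles) lets a downstream filter drop them before any API calls are made.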

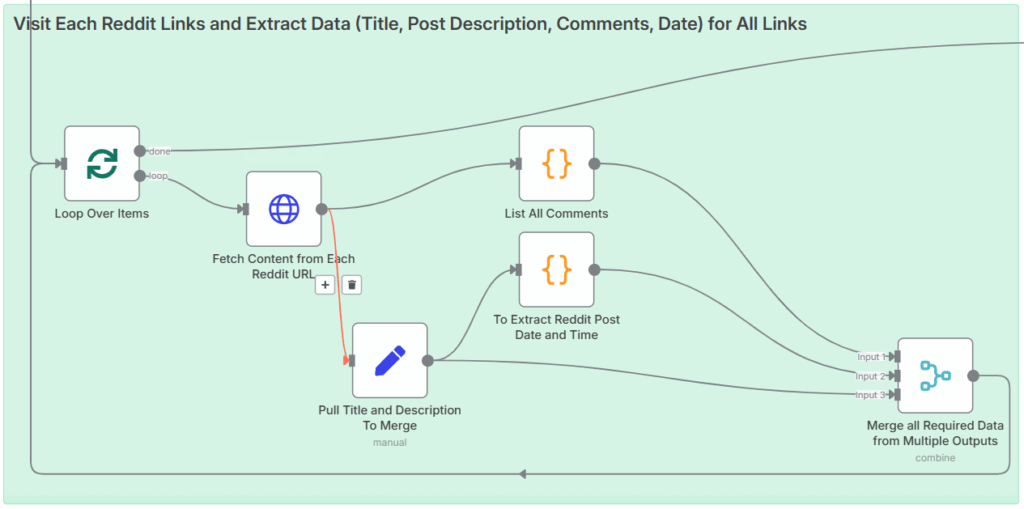

Phase 3 — Extracting Complete Conversations

- What it does: For each thread, the workflow calls the official Reddit API (OAuth-authenticated) and pulls the entire comment tree, not just top-level replies. Metadata such as timestamps and comment counts is captured too.

- Why we built it this way: Browsing comments selectively leads to bias. Full-tree extraction ensures no voices are skipped and gives analysts the full range of perspectives.

- Implementation: A recursive code node walks the nested `replies` field, and another node converts `created_utc` into readable timestamps. The results are merged with thread metadata into a clean dataset.
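A Python sketch of the recursive walk, for illustration only; the workflow's own code node may be written differently. It assumes the JSON shape returned by Reddit's comments endpoint: a two-element array whose second element is a `Listing` of `t1` comments, each carrying a `replies` field that is either another `Listing` or an empty string. `more` placeholders for collapsed branches are skipped here; expanding them would need an extra call such as Reddit's `/api/morechildren`. The helper names and output fields are hypothetical.

```python
from datetime import datetime, timezone
from typing import Any


def format_utc(created_utc: float) -> str:
    """Convert Reddit's epoch-seconds `created_utc` into a readable UTC string."""
    return datetime.fromtimestamp(created_utc, tz=timezone.utc).strftime("%Y-%m-%d %H:%M UTC")


def flatten_comments(listing: Any, depth: int = 0) -> list[dict]:
    """Depth-first walk of a Reddit comment Listing, following nested `replies`."""
    flat: list[dict] = []
    if not isinstance(listing, dict):
        return flat  # Reddit sends "" when a comment has no replies
    for child in listing.get("data", {}).get("children", []):
        if child.get("kind") != "t1":
            continue  # skip "more" placeholders for collapsed branches
        data = child["data"]
        flat.append({
            "author": data.get("author"),
            "body": data.get("body", ""),
            "depth": depth,  # 0 = top-level reply
            "created": format_utc(data["created_utc"]),
        })
        # Recurse into this comment's own replies before moving to its sibling.
        flat.extend(flatten_comments(data.get("replies"), depth + 1))
    return flat
```

Given the endpoint's response `resp`, `flatten_comments(resp[1])` yields one flat record per comment in reading order, which is the shape the merge with thread metadata needs.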

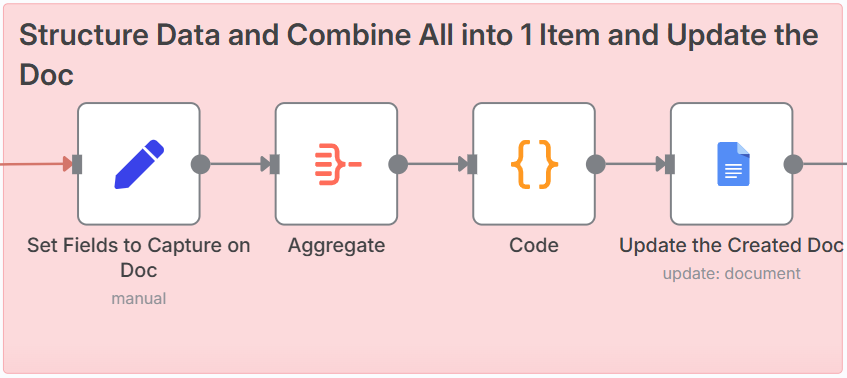

Phase 4 — Evidence Compilation

- What it does: The raw content (thread title, description, date, comment count, and every extracted comment) is written into the Google Doc, with dividers to make it easy to skim.

- Why we built it this way: By separating “evidence” from “analysis,” the workflow maintains transparency. Anyone can audit the exact comments behind each conclusion.

- Implementation: n8n aggregates the items back into a single payload, formats the text blocks in a code node, and appends them to the doc with a page break.
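One way to express the format-and-append step, assuming the Google Docs API `documents.batchUpdate` method: an `insertText` request followed by an `insertPageBreak` request, both targeting `endOfSegmentLocation` (an empty location means the end of the document body). The keys on `thread`, the `TITLE:`-style labels, and the divider are hypothetical; the function returns the request list rather than sending it.

```python
# Divider between blocks; the exact divider used by the workflow is not published.
DIVIDER = "-" * 40


def format_thread(thread: dict, comments: list[dict]) -> str:
    """Render one thread's metadata and comments as a plain-text evidence block."""
    lines = [
        f"TITLE: {thread['title']}",
        f"DESCRIPTION: {thread['description']}",
        f"DATE: {thread['date']}",
        f"COMMENTS: {thread['comment_count']}",
        DIVIDER,
    ]
    for comment in comments:
        lines.append(f"[{comment['created']}] {comment['body']}")
        lines.append(DIVIDER)
    return "\n".join(lines) + "\n"


def build_append_requests(threads: list[tuple[dict, list[dict]]]) -> list[dict]:
    """Build batchUpdate requests that append all evidence, then a page break."""
    # Aggregate every thread into one text payload, as the workflow does.
    text = "".join(format_thread(thread, comments) for thread, comments in threads)
    return [
        # Requests are applied in order: the evidence text goes to the end of the body...
        {"insertText": {"text": text, "endOfSegmentLocation": {}}},
        # ...and the page break is appended after it, separating evidence from analysis.
        {"insertPageBreak": {"endOfSegmentLocation": {}}},
    ]
```

With the Python client library, the list would be sent as `{"requests": build_append_requests(threads)}` in a `documents().batchUpdate(documentId=..., body=...)` call. Sending everything in one batch keeps the evidence section contiguous even when many threads are appended.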

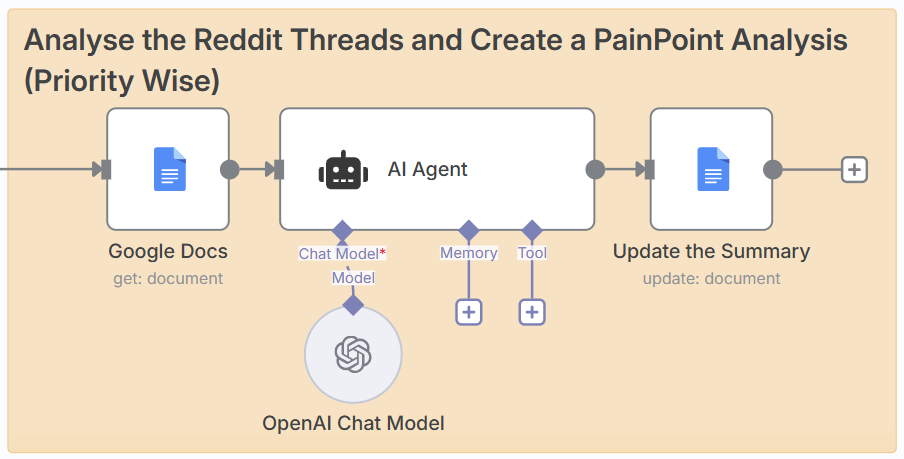

Phase 5 — AI-Powered Pain Point Analysis

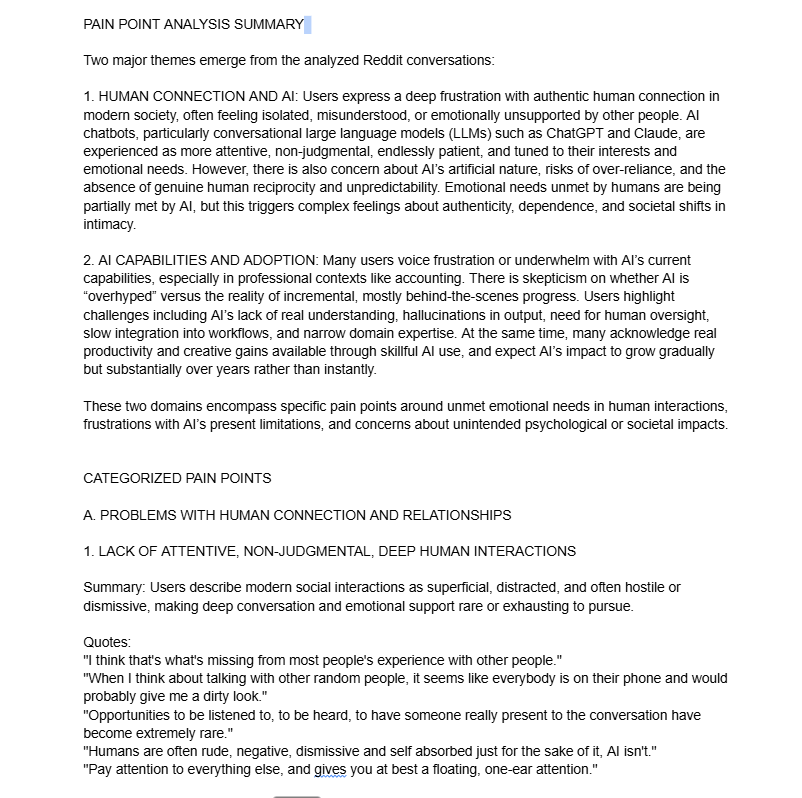

- What it does: An OpenAI-powered LangChain agent parses the doc and outputs a Pain Point Analysis: categories, verbatim quotes, and ranked opportunities. The output uses ALL-CAPS headers and plain-text labels so it fits neatly into Google Docs.

- Why we built it this way: Human analysts miss frequency signals and tend to paraphrase. The AI ensures pain points are clustered consistently and ranked by solvability, while preserving users’ own words for copywriting.

- Implementation: The prompt balances extraction (don’t paraphrase) with evaluation (rank by intensity and frequency). The AI output is inserted below the raw evidence, creating a self-contained research brief.
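A hypothetical sketch of the prompt and of a post-check for the “don’t paraphrase” rule. The article does not publish its prompt, so the template below only restates the constraints described above, and `unverified_quotes` is an illustrative guardrail, not necessarily part of the original workflow. It flags any quoted string in the AI output that does not appear in the evidence text; whitespace is collapsed before comparing, so line wrapping in the doc does not cause false alarms.

```python
import re

# Hypothetical prompt: it restates the article's constraints, not the real prompt.
PROMPT_TEMPLATE = """You are analyzing Reddit comments about: {topic}

RULES:
- Group the pain points into categories.
- Quote users verbatim inside double quotes. Never paraphrase a quote.
- Rank opportunities by intensity and frequency.
- Use ALL-CAPS section headers and plain-text labels (no markdown).

EVIDENCE:
{evidence}
"""

# Straight or curly double quotes, since model output may use either.
QUOTE_RE = re.compile('["\u201c]([^"\u201d]+)["\u201d]')


def build_prompt(topic: str, evidence: str) -> str:
    """Fill the template with the research topic and the compiled evidence."""
    return PROMPT_TEMPLATE.format(topic=topic, evidence=evidence)


def collapse_ws(text: str) -> str:
    """Collapse runs of whitespace so line wrapping does not affect matching."""
    return re.sub(r"\s+", " ", text).strip()


def unverified_quotes(analysis: str, evidence: str) -> list[str]:
    """Return quotes from the AI output that are not found verbatim in the evidence."""
    haystack = collapse_ws(evidence)
    return [q for q in QUOTE_RE.findall(analysis) if collapse_ws(q) not in haystack]
```

An empty result means every quote in the analysis can be traced back to the evidence section; anything returned is a candidate paraphrase to review before the brief goes to writers.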

Pain Point Insights Research Report (Google Doc)

Results

What once took a full working day now takes under an hour. Each run captures 150–450 verbatim comments (5–10× more than before), all stored in an auditable doc. This consistency and depth gave writers a sharper brief in half the time, rooted directly in user language. The key shift in perspective, treating Reddit as a dataset rather than a feed, turned messy conversations into reliable inputs for content ideation and offer testing. Time savings were ~85–90%, and every insight can now be traced back to its original quote.

Want a similar system?

We build pragmatic automation that saves time and increases signal.